Amazon S3 Audit

Amazon Simple Storage Service (S3) provides a simple web services interface that can be used to store and retrieve any amount of data from anywhere on the web. The Sumo Logic App for Amazon S3 Audit presents details from access logs that contain information about the request type, the average response time, and the inbound and outbound data volume.

Log types

Amazon S3 Audit uses Server Access Logs (activity logs). For more information, see Amazon S3 server access log format.

Sample log messages

The server access log files consist of a sequence of newline-delimited log records. Each log record represents one request and consists of space-delimited fields. The following is an example log consisting of six log records.

79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be mybucket [06/Feb/2014:00:00:38 +0000] 192.0.2.3 79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be 3E57427F3EXAMPLE REST.GET.VERSIONING - "GET /mybucket?versioning HTTP/1.1" 200 - 113 - 7 - "-" "S3Console/0.4" -
79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be mybucket [06/Feb/2014:00:00:38 +0000] 192.0.2.3 79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be 891CE47D2EXAMPLE REST.GET.LOGGING_STATUS - "GET /mybucket?logging HTTP/1.1" 200 - 242 - 11 - "-" "S3Console/0.4" -
79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be mybucket [06/Feb/2014:00:00:38 +0000] 192.0.2.3 79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be A1206F460EXAMPLE REST.GET.BUCKETPOLICY - "GET /mybucket?policy HTTP/1.1" 404 NoSuchBucketPolicy 297 - 38 - "-" "S3Console/0.4" -
79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be mybucket [06/Feb/2014:00:01:00 +0000] 192.0.2.3 79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be 7B4A0FABBEXAMPLE REST.GET.VERSIONING - "GET /mybucket?versioning HTTP/1.1" 200 - 113 - 33 - "-" "S3Console/0.4" -
79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be mybucket [06/Feb/2014:00:01:57 +0000] 192.0.2.3 79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be DD6CC733AEXAMPLE REST.PUT.OBJECT s3-dg.pdf "PUT /mybucket/s3-dg.pdf HTTP/1.1" 200 - - 4406583 41754 28 "-" "S3Console/0.4" -
79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be mybucket [06/Feb/2014:00:03:21 +0000] 192.0.2.3 79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be BC3C074D0EXAMPLE REST.GET.VERSIONING - "GET /mybucket?versioning HTTP/1.1" 200 - 113 - 28 - "-" "S3Console/0.4" -
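To make the record layout concrete, the following Python sketch splits one of the sample records into named fields. It is only an illustration, not how Sumo Logic parses the logs: the `parse_record` helper and the snake_case field names are assumptions chosen to mirror the parse expression used in this topic, and the tokenizer assumes that quoted values contain no embedded double quotes. Unlike the Sumo Logic parse expression, it keeps the trailing `HTTP/1.1` in the request URI.

```python
import re
from datetime import datetime

# Field names are illustrative; they follow the order of the first 18 fields.
FIELD_NAMES = [
    "bucket_owner", "bucket", "time", "remote_ip", "requester", "request_id",
    "operation", "key", "request_uri", "status_code", "error_code",
    "bytes_sent", "object_size", "total_time", "turn_time", "referrer",
    "user_agent", "version_id",
]

# A token is a [bracketed] timestamp, a "quoted" string, or a run of non-space characters.
TOKEN = re.compile(r'\[([^\]]*)\]|"([^"]*)"|(\S+)')


def parse_record(line):
    """Split one access log record into a dict of named fields."""
    tokens = [
        bracketed or quoted or bare
        for bracketed, quoted, bare in TOKEN.findall(line)
    ]
    record = dict(zip(FIELD_NAMES, tokens))  # extra trailing fields are ignored
    # The timestamp looks like 06/Feb/2014:00:00:38 +0000.
    record["time"] = datetime.strptime(record["time"], "%d/%b/%Y:%H:%M:%S %z")
    return record


SAMPLE = (
    '79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be mybucket '
    '[06/Feb/2014:00:00:38 +0000] 192.0.2.3 '
    '79a59df900b949e55d96a1e698fbacedfd6e09d98eacf8f8d5218e7cd47ef2be '
    '3E57427F3EXAMPLE REST.GET.VERSIONING - '
    '"GET /mybucket?versioning HTTP/1.1" 200 - 113 - 7 - "-" "S3Console/0.4" -'
)

record = parse_record(SAMPLE)
print(record["operation"], record["status_code"], record["time"].isoformat())
```

Fields that S3 reports as `-` (for example, the key of a bucket-level request) are left as the literal string `-` here; a real pipeline would probably map them to a null value.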

Sample queries

| parse "* * [*] * * * * * \"* HTTP/1.1\" * * * * * * * \"*\" *" as bucket_owner, bucket, time, remoteIP, requester, request_ID, operation, key, request_URI, status_code, error_code, bytes_sent, object_size, total_time, turn_time, referrer, user_agent, version_ID

| parse regex field=operation "[A-Z]+\.(?<operation>[\w.]+)"

| count by operation
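As a rough illustration of what the last two query stages do, the Python sketch below applies the same regular expression to the operation values from the six sample records and counts the results. The `count_by_operation` helper is hypothetical, and Python spells the named group as `(?P<operation>...)` rather than `(?<operation>...)`.

```python
import re
from collections import Counter

# Same pattern as the query: drop the leading protocol segment (for example, "REST.").
OPERATION = re.compile(r"[A-Z]+\.(?P<operation>[\w.]+)")


def count_by_operation(raw_operations):
    """Count requests by operation after stripping the leading segment."""
    counts = Counter()
    for raw in raw_operations:
        match = OPERATION.search(raw)
        if match:
            counts[match.group("operation")] += 1
    return counts


# Operation values taken from the six sample log records.
sample_operations = [
    "REST.GET.VERSIONING",
    "REST.GET.LOGGING_STATUS",
    "REST.GET.BUCKETPOLICY",
    "REST.GET.VERSIONING",
    "REST.PUT.OBJECT",
    "REST.GET.VERSIONING",
]

counts = count_by_operation(sample_operations)
for operation, total in counts.most_common():
    print(operation, total)
```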

Collecting logs for the Amazon S3 Audit app

This topic details how to collect logs for Amazon S3 Audit and ingest them into Sumo Logic.

Once you begin uploading data, your daily data usage will increase. It's a good idea to check the Account page in Sumo Logic to make sure that you have enough quota to accommodate additional data in your account. If you need additional quota you can upgrade your account at any time.

Prerequisites

Before you can begin to collect logs from an S3 bucket, perform the following steps:

- Enable logging via the AWS Management Console.

- Confirm that logs are being delivered to an S3 bucket.

- Grant Sumo Logic Access to the Amazon S3 Bucket.

Configure a Collector

In Sumo Logic, configure a Hosted Collector.

Configure an S3 Audit Source

When you create an AWS Source, you'll need to identify the Hosted Collector you want to use or create a new Hosted Collector. Once you create an AWS Source, associate it with a Hosted Collector. For instructions, see Configure a Hosted Collector.

Rules

- If you're editing the Collection should begin date on a Source, the new date must be after the current Collection should begin date. (Note that if you set this property to a collection time that overlaps with data that was previously ingested on a Source, duplicate data may be ingested into Sumo Logic.)

- Sumo Logic supports log files (S3 objects) that do NOT change after they are uploaded to S3. Support is not provided if your logging approach relies on updating files stored in an S3 bucket. S3 does not have a concept of updating existing files; you can only overwrite an existing file. When this overwrite happens, S3 considers it a new file object, or a new version of the file, and that file object gets its own unique version ID.

- Sumo Logic scans an S3 bucket based on the path expression supplied, or receives an SNS notification when a new file object is created. As part of this, we receive a file name (key) and the object's ID. The ID is compared against a list of file objects already ingested. If a matching file ID is not found, the contents of the file are ingested in full.

- When you overwrite a file in S3, the file object gets a new version ID and as a result, Sumo Logic sees it as a new file and ingests all of it. If with each version you post to S3 you are simply adding to the end of the file, then this will lead to duplicate messages ingested, one message for each version of the file you created in S3.

- Glacier objects will not be collected and are ignored.

- If you're using SNS you need to create a separate topic and subscription for each Source.
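The overwrite rules above can be illustrated with a small simulation. This is a sketch of the behavior as described, not Sumo Logic's implementation: the `ingest` helper, the `seen` set, and the version IDs `v1` and `v2` are all hypothetical.

```python
def ingest(seen, key, version_id, lines):
    """Return the lines ingested for one object version, or nothing if already seen."""
    object_id = (key, version_id)
    if object_id in seen:
        return []  # already ingested, for example found again by a later scan
    seen.add(object_id)
    return list(lines)  # a new object ID means the whole file is ingested


seen = set()
ingested = []

# First upload of app.log.
ingested += ingest(seen, "app.log", "v1", ["line 1"])
# The file is overwritten with one appended line, so it gets a new version ID.
ingested += ingest(seen, "app.log", "v2", ["line 1", "line 2"])
# A later scan sees the same version again and ingests nothing.
ingested += ingest(seen, "app.log", "v2", ["line 1", "line 2"])

print(ingested)
```

Because the second version is treated as a new object, "line 1" ends up ingested twice, which is the duplication the rules warn about for append-style logging.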

S3 Event Notifications Integration

The Sumo Logic S3 integration combines scan-based discovery and event-based discovery into a unified integration that gives you the ability to maintain a low-latency integration for new content and provide assurances that no data was missed or dropped.

When you enable event-based notifications, S3 automatically publishes notifications about new files to an Amazon Simple Notification Service (SNS) topic, to which Sumo Logic can be subscribed. This notifies Sumo Logic immediately when new files are added to your S3 bucket so we can collect them. For more information about SNS, see the Amazon SNS product docs.

Enabling event-based notifications is an S3 bucket-level operation that subscribes to an SNS topic. An SNS topic is an access point that Sumo Logic can dynamically subscribe to in order to receive event notifications. When creating a Source that collects from an S3 bucket, Sumo Logic assigns an endpoint URL to the Source. The URL is for you to use in the AWS subscription to the SNS topic so AWS notifies Sumo when there are new files. See Configuring Amazon S3 Event Notifications for more information.

You can adjust the configuration of when and how AWS handles communication attempts with Sumo Logic. See Setting Amazon SNS Delivery Retry Policies for details.
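For orientation, the sketch below shows the general shape of what an HTTPS endpoint subscribed to the topic receives: an SNS envelope whose `Message` field is a JSON string containing the S3 event records. It is an assumption-laden illustration, not a description of how Sumo Logic processes notifications; the `new_objects` helper and the sample payload values are hypothetical.

```python
import json
from urllib.parse import unquote_plus


def new_objects(sns_body):
    """Return (bucket, key) pairs for object-created events in one SNS delivery."""
    envelope = json.loads(sns_body)
    if envelope.get("Type") != "Notification":
        return []  # for example, a SubscriptionConfirmation request
    event = json.loads(envelope["Message"])  # the S3 event is a JSON string
    found = []
    for rec in event.get("Records", []):
        if not rec.get("eventName", "").startswith("ObjectCreated:"):
            continue
        s3 = rec["s3"]
        # Object keys are URL-encoded in S3 event notifications.
        found.append((s3["bucket"]["name"], unquote_plus(s3["object"]["key"])))
    return found


# Hypothetical delivery for a new log object in "mybucket".
s3_event = {
    "Records": [
        {
            "eventName": "ObjectCreated:Put",
            "s3": {
                "bucket": {"name": "mybucket"},
                "object": {"key": "logs/2014-02-06-00-00-38-EXAMPLE"},
            },
        }
    ]
}
body = json.dumps({"Type": "Notification", "Message": json.dumps(s3_event)})
print(new_objects(body))
```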

Create an AWS Source

These configuration instructions apply to log collection from all AWS Source types.

- Classic UI. In the main Sumo Logic menu, select Manage Data > Collection > Collection.

  New UI. In the Sumo Logic main menu, select Data Management, and then under Data Collection select Collection. You can also click the Go To... menu at the top of the screen and select Collection.

- On the Collectors page, click Add Source next to a Hosted Collector, either an existing Hosted Collector, or one you have created for this purpose.

- Select your AWS Source type.

- Enter a name for the new Source. A description is optional.

- Select an S3 region or keep the default value of Others. The S3 region must match the appropriate S3 bucket created in your Amazon account.

Selecting an AWS GovCloud region means your data will be leaving a FedRAMP-high environment. Use responsibly to avoid information spillage. See Collection from AWS GovCloud for details.

- For Bucket Name, enter the exact name of your organization's S3 bucket. Be sure to double-check the name as it appears in AWS.

- For Path Expression, enter the wildcard pattern that matches the S3 objects you'd like to collect. You can use one wildcard (*) in this string. Recursive path expressions use a single wildcard and do NOT use a leading forward slash. See About Amazon Path Expressions for details.

- Collection should begin. Choose or enter how far back you'd like to begin collecting historical logs. You can either:

- Choose a predefined value from the dropdown list, ranging from "Now" to "72 hours ago" to "All Time", or

- Enter a relative value. To enter a relative value, click the Collection should begin field and press the delete key on your keyboard to clear the field. Then, enter a relative time expression, for example -1w. You can define when you want collection to begin in terms of months (M), weeks (w), days (d), hours (h), and minutes (m).

If you paused the Source and want to skip some data when you resume, update the Collection should begin setting to a time after it was paused.

Note: If you set Collection should begin to a collection time that overlaps with data that was previously ingested on a Source, duplicate data may be ingested into Sumo Logic.

- For Source Category, enter any string to tag the output collected from this Source. Category metadata is stored in a searchable field called _sourceCategory. Some examples: _sourceCategory: aws/observability/alb/logs or _sourceCategory: aws/observability/clb/logs.

- Fields. Click the +Add Field link to add custom log metadata Fields. Define the fields you want to associate; each field needs a name (key) and value. The following Fields are to be added in the Source:

- Add an account field and assign it a value that is a friendly name or alias for the AWS account from which you are collecting logs. Logs can be queried via the account field.

- Add a region field and assign it the value of the respective AWS region where the S3 bucket exists.

- Add an accountId field and assign it the value of the respective AWS account ID that is being used.

A green circle with a check mark is shown when the field exists and is enabled in the Fields table schema.

An orange triangle with an exclamation point is shown when the field doesn't exist, or is disabled in the Fields table schema. In this case, you'll see an option to automatically add or enable the nonexistent fields to the Fields table schema. If a field is sent to Sumo Logic but isn’t present or enabled in the schema, it’s ignored and marked as Dropped.

- For AWS Access, choose between the two Access Method options below, based on the AWS authentication you are providing.

- For Role-based access, enter the Role ARN that was provided by AWS after creating the role. Role-based access is recommended (this was completed in the prerequisite step Grant Sumo Logic access to an AWS Product).

- For Key access, enter the Access Key ID and Secret Access Key. See AWS Access Key ID and AWS Secret Access Key for details.

- Log File Discovery. You have the option to set up Amazon Simple Notification Service (SNS) to notify Sumo Logic of new items in your S3 bucket. A scan interval is required and automatically applied to detect log files.

- Scan Interval. Sumo Logic will periodically scan your S3 bucket for new items in addition to SNS notifications. Automatic is recommended to avoid incurring additional AWS charges. Automatic sets the scan interval based on whether the Source is subscribed to an SNS topic endpoint and how often new files are detected over time. If the Source is not subscribed to an SNS topic and is set to Automatic, the scan interval is 5 minutes. You may instead enter a set frequency to scan your S3 bucket for new data. To learn more about Scan Interval considerations, see About setting the S3 Scan Interval.

- SNS Subscription Endpoint (recommended option). New files will be collected by Sumo Logic as soon as the notification is received. This will provide faster collection versus having to wait for the next scan to detect the new file. We highly recommend using an SNS Subscription Endpoint for its ability to maintain low-latency collection. This is essential to support up-to-date Alerts. The following steps use the AWS SNS Console. (Alternatively, you can use AWS CloudFormation; see Using CloudFormation to Set Up an SNS Subscription Endpoint).

- To set up the subscription, you need to get an endpoint URL from Sumo to provide to AWS. This process will save your Source and begin scanning your S3 bucket when the endpoint URL is generated. Click Create URL and use the provided endpoint URL when creating your subscription in step C.

- Go to Services > Simple Notification Service and click Create Topic. Enter a Topic name and click Create topic. Copy the provided Topic ARN, which you’ll need for the next step. Make sure that the topic and the bucket are in the same region.

- Again, go to Services > Simple Notification Service and click Create Subscription. Paste the Topic ARN from step B above. Select HTTPS as the protocol and enter the Endpoint URL provided while creating the S3 source in Sumo Logic. Click Create subscription and a confirmation request will be sent to Sumo Logic. The request will be automatically confirmed by Sumo Logic.

- Select the Topic created in step B and navigate to Actions > Edit Topic Policy. Use the following policy template, replace the SNS-topic-ARN and bucket-name placeholders in the Resource section of the JSON policy with your actual SNS Topic ARN and S3 Bucket name:

    {
      "Version": "2008-10-17",
      "Statement": [
        {
          "Effect": "Allow",
          "Principal": {
            "AWS": "*"
          },
          "Action": [
            "SNS:Publish"
          ],
          "Resource": "SNS-topic-ARN",
          "Condition": {
            "ArnLike": {
              "aws:SourceArn": "arn:aws:s3:*:*:bucket-name"
            }
          }
        }
      ]
    }

- Go to Services > S3 and select the bucket to which you want to attach the notifications. Navigate to Properties > Events > Add Notification. Enter a Name for the event notification. In the Events section select All object create events. In the Send to section (notification destination) select SNS Topic. An SNS section becomes available, select the name of the topic you created in step B from the dropdown. Click Save.

- Set any of the following under Advanced:

- Enable Timestamp Parsing. This option is selected by default. If it's deselected, no timestamp information is parsed at all.

- Time Zone. There are two options for Time Zone. You can use the time zone present in your log files, and then choose an option in case time zone information is missing from a log message. Or, you can have Sumo Logic completely disregard any time zone information present in logs by forcing a time zone. It's very important to have the proper time zone set, no matter which option you choose. If the time zone of logs cannot be determined, Sumo Logic assigns logs UTC; if the rest of your logs are from another time zone your search results will be affected.

- Timestamp Format. By default, Sumo Logic will automatically detect the timestamp format of your logs. However, you can manually specify a timestamp format for a Source. See Timestamps, Time Zones, Time Ranges, and Date Formats for more information.

- Enable Multiline Processing. See Collecting Multiline Logs for details on multiline processing and its options. This is enabled by default. Use this option if you're working with multiline messages (for example, log4J or exception stack traces). Deselect this option if you want to avoid unnecessary processing when collecting single-message-per-line files (for example, Linux system.log). Choose one of the following:

- Infer Boundaries. Enable when you want Sumo Logic to automatically attempt to determine which lines belong to the same message. If you deselect the Infer Boundaries option, you will need to enter a regular expression in the Boundary Regex field to use for detecting the entire first line of multiline messages.

- Boundary Regex. You can specify the boundary between messages using a regular expression. Enter a regular expression that matches the entire first line of every multiline message in your log files.

- Create any Processing Rules you'd like for the AWS Source.

- When you're finished configuring the Source, click Save.
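If you prefer to generate the topic policy from the Log File Discovery step rather than edit the template by hand, a minimal Python sketch could look like the following. The `build_topic_policy` helper is hypothetical, and the topic ARN, account number, and bucket name in the usage line are placeholder values, not real resources.

```python
import json


def build_topic_policy(topic_arn, bucket_name):
    """Fill the SNS-topic-ARN and bucket-name placeholders of the policy template."""
    return {
        "Version": "2008-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": "*"},
                "Action": ["SNS:Publish"],
                "Resource": topic_arn,
                "Condition": {
                    # Only the named bucket is allowed to publish to the topic.
                    "ArnLike": {"aws:SourceArn": f"arn:aws:s3:*:*:{bucket_name}"}
                },
            }
        ],
    }


# Placeholder values for illustration only.
policy = build_topic_policy(
    "arn:aws:sns:us-east-1:123456789012:sumo-s3-topic", "mybucket"
)
print(json.dumps(policy, indent=2))
```

The printed JSON can then be pasted into the topic policy editor in the AWS SNS Console.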

SNS with one bucket and multiple Sources

When collecting from one Amazon S3 bucket with multiple Sumo Sources, you need to create a separate topic and subscription for each Source. Subscriptions and Sumo Sources should both map to only one endpoint. If you were to have multiple subscriptions for the same endpoint, Sumo would collect your objects multiple times.

Each topic needs a separate filter (prefix/suffix) so that collection does not overlap. For example, the following image shows a bucket configured with two notifications that have filters (prefix/suffix) set to notify Sumo separately about new objects in different folders.
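One way to sanity-check that two notification filters do not overlap is sketched below. It assumes each filter is a (prefix, suffix) pair where an empty string means "no filter"; the `filters_overlap` helper and the folder names are hypothetical. Two filters can match the same object key only if one prefix starts with the other and one suffix ends with the other.

```python
def filters_overlap(first, second):
    """Return True if some object key could match both (prefix, suffix) filters."""
    first_prefix, first_suffix = first
    second_prefix, second_suffix = second
    prefixes_compatible = (
        first_prefix.startswith(second_prefix)
        or second_prefix.startswith(first_prefix)
    )
    suffixes_compatible = (
        first_suffix.endswith(second_suffix)
        or second_suffix.endswith(first_suffix)
    )
    return prefixes_compatible and suffixes_compatible


# Separate folders: safe to map to two different Sources.
print(filters_overlap(("logs/app1/", ""), ("logs/app2/", "")))
# A parent folder and one of its subfolders: objects would be collected twice.
print(filters_overlap(("logs/", ".gz"), ("logs/app1/", "")))
```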

Update Source to use S3 Event Notifications

You can use this community-supported script to configure event-based object discovery on existing AWS Sources.

- Classic UI. In the main Sumo Logic menu, select Manage Data > Collection > Collection.

  New UI. In the Sumo Logic main menu, select Data Management, and then under Data Collection select Collection. You can also click the Go To... menu at the top of the screen and select Collection.

- On the Collection page, navigate to your Source and click Edit. Scroll down to Log File Discovery and note the Endpoint URL provided. You will use it in step 12.C when creating your subscription.

- Complete steps 12.B through 12.E under Create an AWS Source > 12. Log File Discovery.

Troubleshoot S3 Event Notifications

In the Sumo Logic UI, under 'Log File Discovery', there is a red exclamation mark with the message 'Sumo Logic has not received a validation request from AWS'.

Steps to troubleshoot:

- Refresh the Source’s page to view the latest status of the subscription in the SNS Subscription section by clicking Cancel then Edit on the Source in the Collection tab.

- Verify you have enabled sending Notifications from your S3 bucket to the appropriate SNS topic. This is done in Create an AWS Source > 12. Log File Discovery > Step E.

- If you didn’t use CloudFormation, check that the SNS topic has a confirmed subscription to the URL in AWS console. A "Pending Confirmation" state likely means that you entered the wrong URL while creating the subscription.

In the Sumo Logic UI, under 'Log File Discovery', there is a green check with the message 'Sumo Logic has received an AWS validation request at this endpoint', but still high latencies.

The green check confirms that the endpoint was used correctly, but it does not mean Sumo Logic is receiving notifications successfully.

Steps to troubleshoot:

- AWS writes CloudTrail and S3 Audit Logs to S3 with a latency of a few minutes. If you’re seeing latencies of around 10 minutes for these Sources it is likely because AWS is writing them to S3 later than expected.

- Verify you have enabled sending Notifications from your S3 bucket to the appropriate SNS topic. This is done in Create an AWS Source > 12. Log File Discovery > Step E.

Field Extraction Rules

Field Extraction Rules (FERs) tell Sumo Logic which fields to parse out automatically. For instructions, see Create a Field Extraction Rule.

Use the following Parse Expression:

parse "* * [*] * * * * * \"* HTTP/1.1\" * * * * * * * \"*\" *" as bucket_owner, bucket, time, remoteIP, requester, request_ID, operation, key, request_URI, status_code, error_code, bytes_sent, object_size, total_time, turn_time, referrer, user_agent, version_ID

Installing the Amazon S3 Audit app

Now that you have configured log collection for Amazon S3 Audit, install the Sumo Logic App for Amazon S3 Audit and take advantage of predefined searches and dashboards. The app presents details from access logs that contain information about the request type, the average response time, and the inbound and outbound data volume.

To install the app, do the following:

Next-Gen App: To install or update the app, you must be an account administrator or a user with the Manage Apps, Manage Monitors, Manage Fields, Manage Metric Rules, and Manage Collectors capabilities, depending on the content types included in the app.

- Select App Catalog.

- In the 🔎 Search Apps field, run a search for your desired app, then select it.

- Click Install App.

Note: Sometimes this button says Add Integration.

- Click Next in the Setup Data section.

- In the Configure section of your respective app, complete the following fields.

- Field Name. If you already have collectors and sources set up, select the configured metadata field name (e.g., _sourcecategory) or specify other custom metadata (e.g., _collector) along with its metadata Field Value.

- Click Next. You will be redirected to the Preview & Done section.

Post-installation

Once your app is installed, it will appear in your Installed Apps folder, and dashboard panels will start to fill automatically.

Each panel slowly fills with data that matches the time range query and was received since the panel was created. Results will not be available immediately but will be updated with full graphs and charts over time.

Viewing Amazon S3 Audit dashboards

All dashboards have a set of filters that you can apply to the entire dashboard. Use these filters to drill down and examine the data to a granular level.

- You can change the time range for a dashboard or panel by selecting a predefined interval from a drop-down list, choosing a recently used time range, or specifying custom dates and times. Learn more.

- You can use template variables to drill down and examine the data on a granular level. For more information, see Filtering Dashboards with Template Variables.

- Most Next-Gen apps allow you to provide the scope at installation time. The scope consists of a key (_sourceCategory by default) and a default value for this key. Based on your input, the app dashboards will be parameterized with a dashboard variable, allowing you to change the dataset queried by all panels. This eliminates the need to create multiple copies of the same dashboard with different queries.

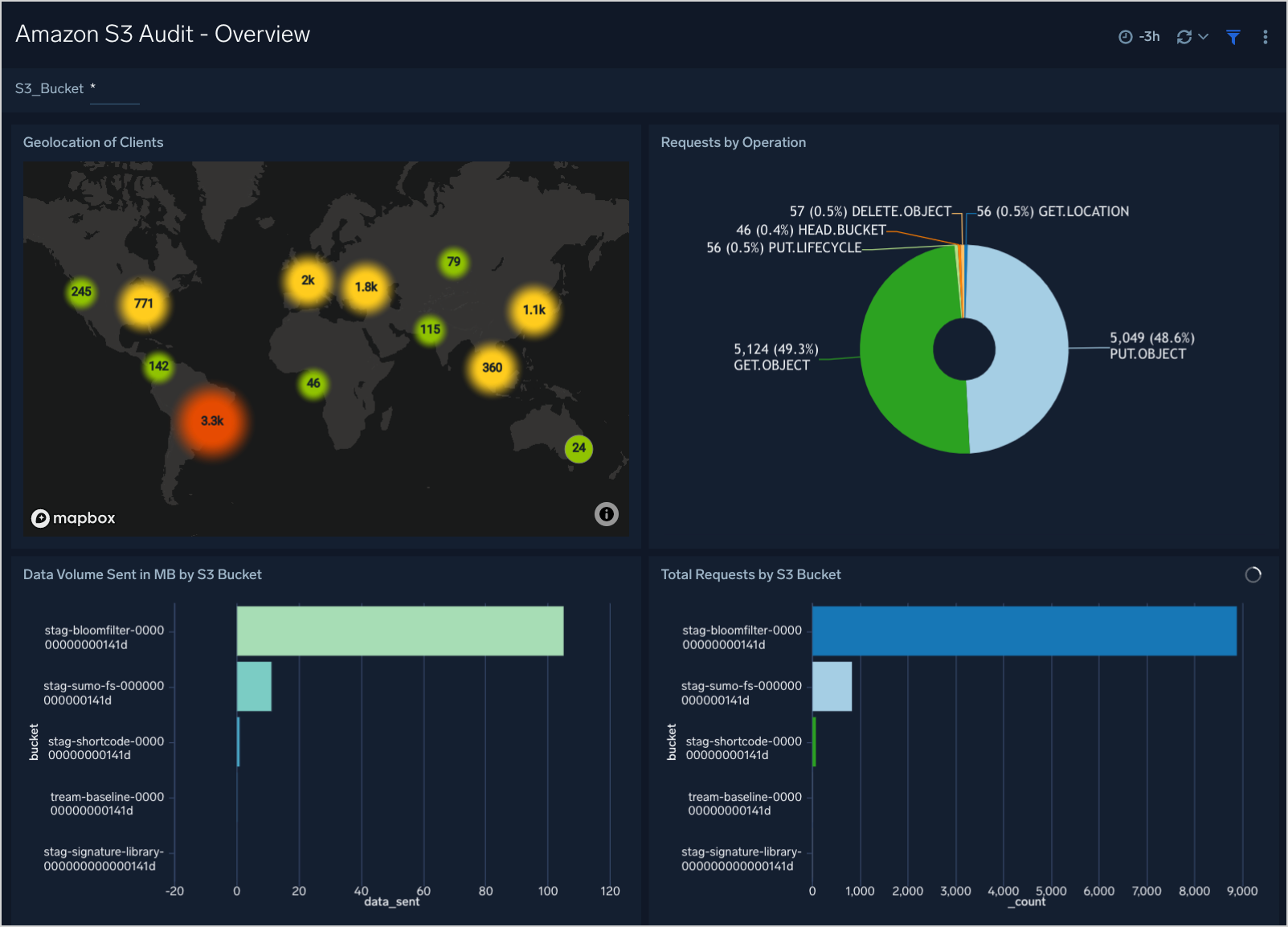

Overview

This dashboard provides the geolocation of S3 operations, requests performed, data volume sent, and total requests by S3 buckets.

Geolocation of Clients. Performs a geo lookup operation and displays the location of S3 bucket clients and the number of requests per bucket on a map of the world for the last three hours.

Requests by Operation. Displays the requests performed for the S3 bucket in a pie chart listed by operation type in a legend for the last three hours.

Data Volume Sent in MB by S3 Bucket. Shows the data volume per S3 bucket in megabytes, displayed in a bar chart for the last three hours.

Total Requests by S3 Bucket. Shows the total requests per S3 bucket, displayed in a bar chart for the last three hours.

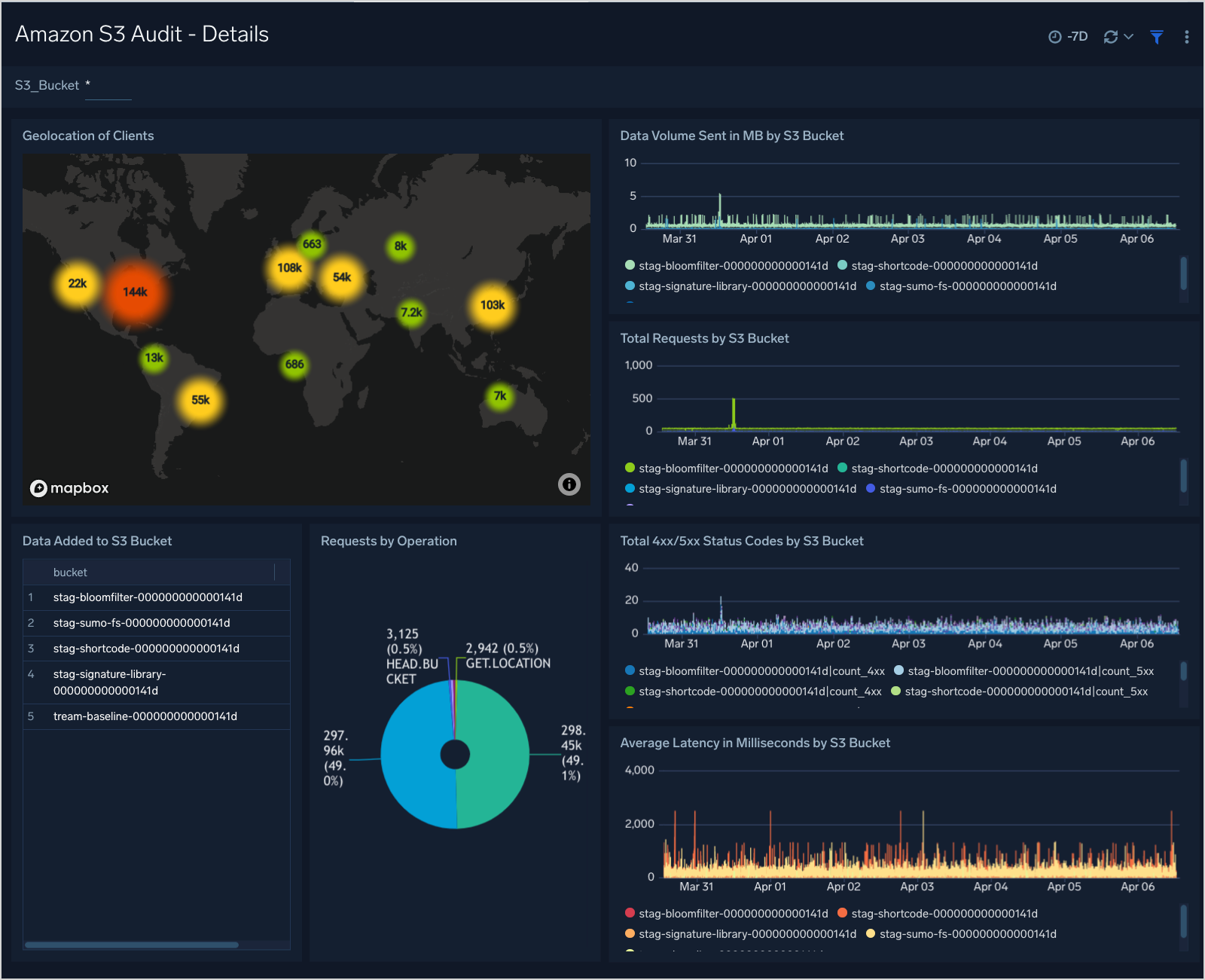

Details

This dashboard provides geolocation of S3 clients, data added, 4xx/5xx status codes, latency, and requests by S3 buckets.

Geolocation of Clients. Performs a geo lookup operation and displays the location of S3 bucket clients and the number of requests per bucket on a map of the world for the last three hours.

Data Volume Sent in MB by S3 Bucket. Shows the data volume per S3 bucket in megabytes, displayed in an area chart on a timeline for the last three hours.

Total Requests by S3 Bucket. Shows the total requests per S3 bucket, displayed in an area chart on a timeline for the last three hours.

Data Added to S3 Bucket. Lists the connected S3 bucket name and displays the amount of data loaded per bucket in megabytes in an aggregation table for the last three hours.

Requests by Operation. Displays the requests performed for the S3 bucket in a pie chart listed by operation type in a legend for the last three hours.

Total 4xx/5xx Status codes by S3 Bucket. Lists the total 4xx or 5xx error status codes by S3 bucket in a stacked column chart on a timeline for the last three hours.

Average Latency in Milliseconds by S3 Bucket. Displays the average latency time per S3 bucket in milliseconds in an area chart on a timeline for the last three hours.
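The dashboard queries themselves are not shown in this topic, but the kind of aggregation behind the error and latency panels can be sketched in Python. The `summarize` helper and the input records are hypothetical; the sketch assumes each record carries the `bucket`, `status_code`, and `total_time` fields from the access log, with `-` meaning that no value was reported.

```python
from collections import defaultdict


def summarize(records):
    """Return (average total_time in ms by bucket, 4xx/5xx count by bucket)."""
    latencies = defaultdict(list)
    errors = defaultdict(int)
    for rec in records:
        bucket = rec["bucket"]
        if rec["total_time"] != "-":
            latencies[bucket].append(int(rec["total_time"]))
        if rec["status_code"][:1] in ("4", "5"):
            errors[bucket] += 1
    averages = {bucket: sum(v) / len(v) for bucket, v in latencies.items()}
    return averages, dict(errors)


# Hypothetical parsed records for illustration.
sample_records = [
    {"bucket": "mybucket", "status_code": "200", "total_time": "10"},
    {"bucket": "mybucket", "status_code": "404", "total_time": "20"},
    {"bucket": "otherbucket", "status_code": "200", "total_time": "-"},
]

averages, errors = summarize(sample_records)
print(averages, errors)
```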

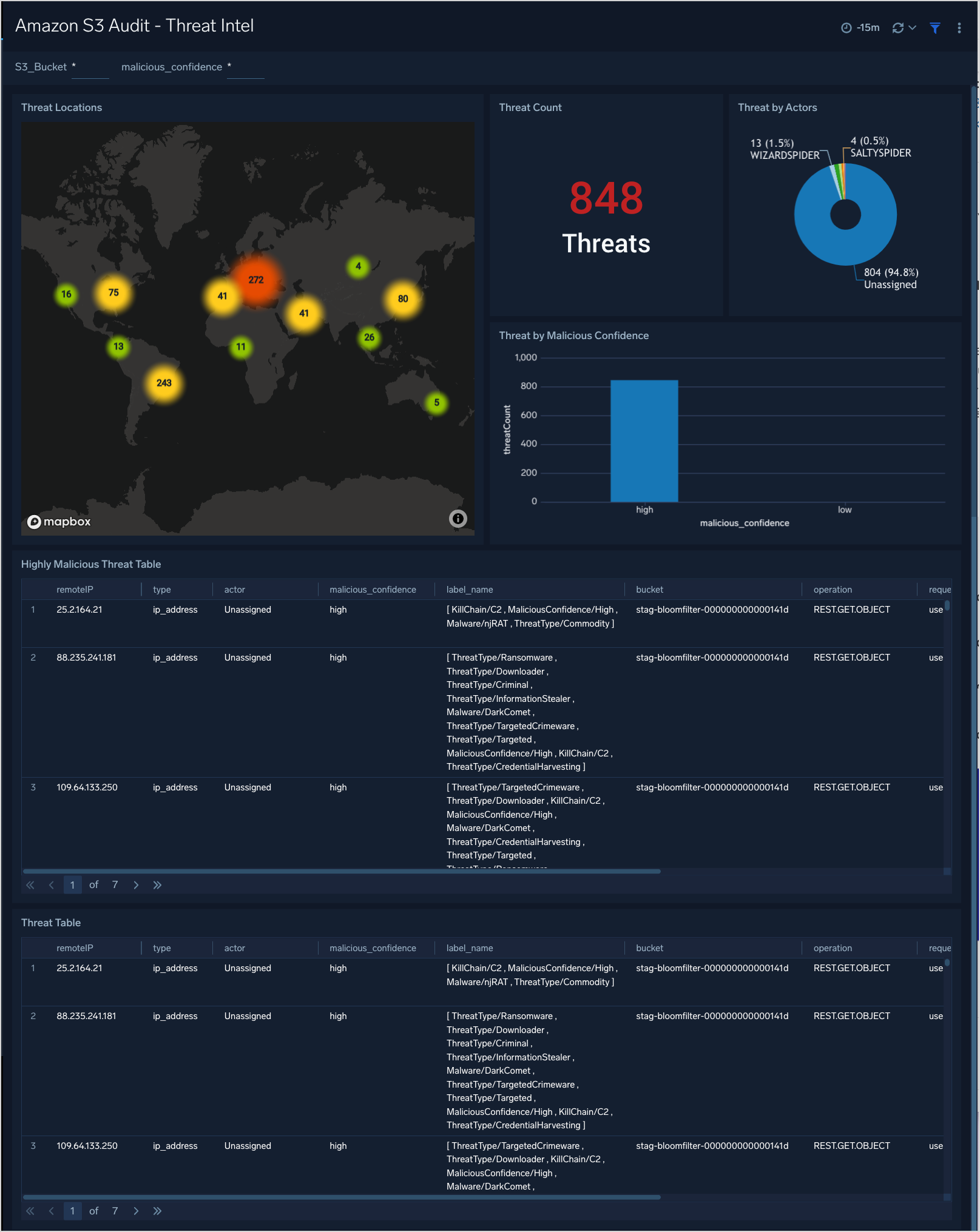

Threat Intel

This dashboard provides high-level views of threats throughout your S3 Service. Dashboard panels display visual graphs and detailed information on Threats by Client IP, Threats by Actors, and Threat by Malicious Confidence.

Upgrade/Downgrade the Amazon S3 Audit app (Optional)

To update the app, do the following:

Next-Gen App: To install or update the app, you must be an account administrator or a user with the Manage Apps, Manage Monitors, Manage Fields, Manage Metric Rules, and Manage Collectors capabilities, depending on the content types included in the app.

- Select App Catalog.

- In the Search Apps field, search for and then select your app.

  Optionally, you can identify apps that can be upgraded in the Upgrade available section.

- To upgrade the app, select Upgrade from the Manage dropdown.

- If the upgrade does not have any configuration or property changes, you will be redirected to the Preview & Done section.

- If the upgrade has any configuration or property changes, you will be redirected to the Setup Data page.

- In the Configure section of your respective app, complete the following fields.

- Field Name. If you already have collectors and sources set up, select the configured metadata field name (e.g., _sourcecategory) or specify other custom metadata (e.g., _collector) along with its metadata Field Value.

- Click Next. You will be redirected to the Preview & Done section.

Post-update

Your upgraded app will be installed in the Installed Apps folder and dashboard panels will start to fill automatically.

See our Release Notes changelog for new updates in the app.

To revert the app to a previous version, do the following:

- Select App Catalog.

- In the Search Apps field, search for and then select your app.

- To version down the app, select Revert to < previous version of your app > from the Manage dropdown.

Uninstalling the Amazon S3 Audit app (Optional)

To uninstall the app, do the following:

- Select App Catalog.

- In the 🔎 Search Apps field, run a search for your desired app, then select it.

- Click Uninstall.